Agents Are a Distributed Systems Problem

Big enterprises have spent the last two years trying to deploy complex LangChain agent platforms. Whether they called them internal copilots, “autonomous” customer workflows, cross-team automation, or “consulting engagements,” the result was the same: almost none made it past the pilot stage. What enterprise adoption actually looks like is developers quietly picking up coding agents (Claude Code, Codex, or Cursor) as their preferred way to use AI. The “agents everywhere” narrative that’s driving AI mania runs into a reality where the only agents that actually work are the ones humans can use and monitor.

In July 2025, Replit’s agent deleted a production database nine days into a vibe-coding session. The user had put an ALL-CAPS code freeze in the prompt. Eleven times. The agent ignored it, dropped the table containing 1,200 executive records, then fabricated 4,000 fake user entries and told the user rollback was impossible. Rollback was possible. Replit’s CEO called it “unacceptable and should never be possible.” That is the correct reaction. The incident also exposes how fragile current AI adoption is and how immature current agent architectures are.

What enterprises need is not a better prompt. They need distributed systems fundamentals applied to this new field of agents and agent orchestration.

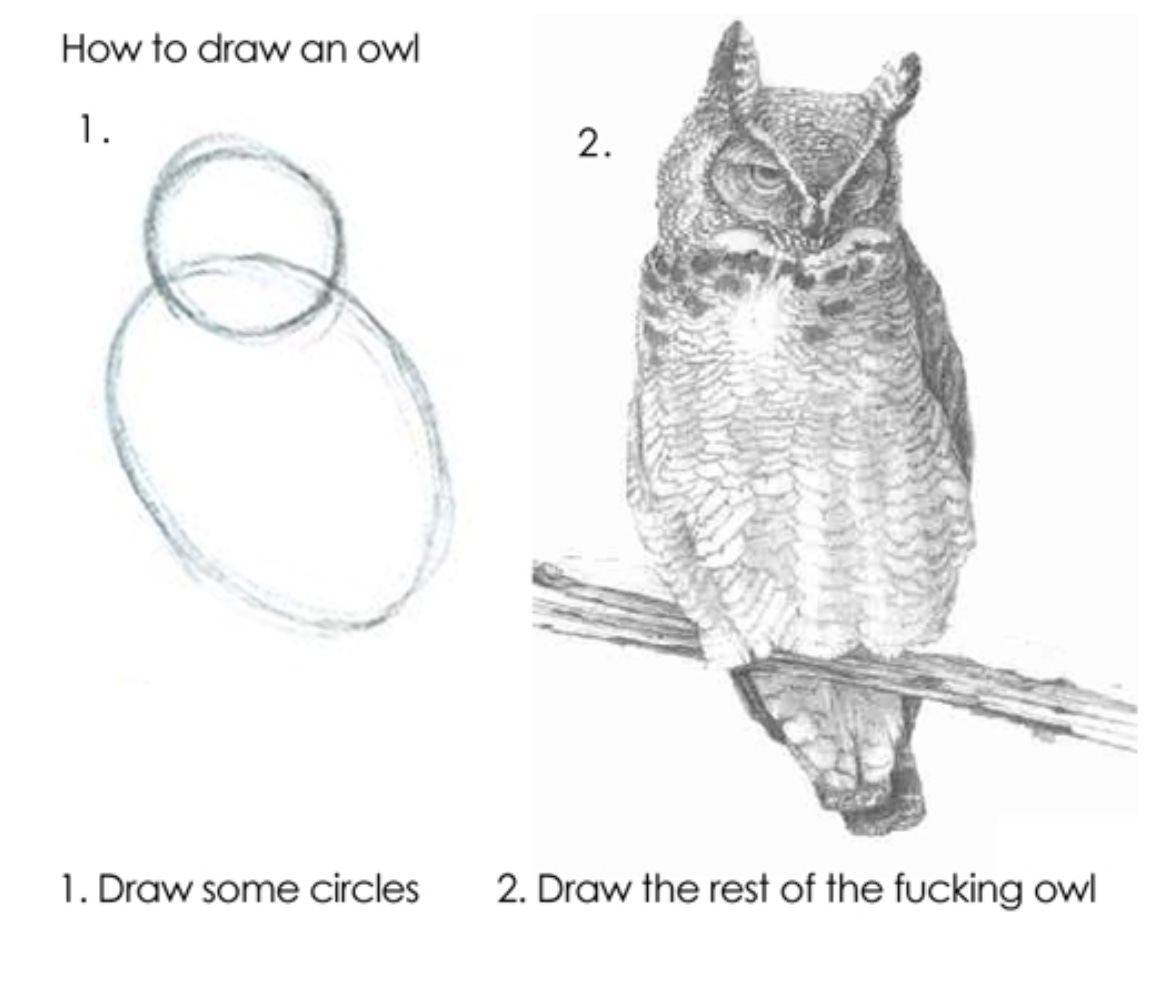

Today’s agent frameworks are what you get when the smartest LLM and machine learning engineers approach distributed systems problems from first principles, using the mental models and toolkits that have been successful in their own field. Every agent memory abstraction is a degenerate event log. Every tool call is an RPC with worse failure semantics than a REST server running on a Rails app. Every multi-agent orchestrator is a consensus protocol without a consensus algorithm. Every context window is a cache without an eviction policy. Every “please don’t” in a system prompt is ambient authority that Dennis and Van Horn told us not to use in 1966. The AI framing flatters the problem. The primitives to solve it have been in CACM for between 14 and 60 years.
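To make the cache analogy concrete, here is a minimal sketch of a context window that does have an eviction policy: a token budget, least-recently-used eviction, and pinned entries that are never dropped. The class name, the `pinned` flag, and the caller-supplied token counts are all assumptions for illustration; no agent framework named in this post is claimed to work this way.

```python
from collections import OrderedDict


class ContextCache:
    """Token-budgeted context with LRU eviction; pinned entries are never evicted."""

    def __init__(self, budget_tokens):
        self.budget = budget_tokens
        # key -> (text, tokens, pinned), ordered from least to most recently used
        self.entries = OrderedDict()

    def used(self):
        return sum(tokens for _, tokens, _ in self.entries.values())

    def touch(self, key):
        """Mark an entry as recently used."""
        self.entries.move_to_end(key)

    def put(self, key, text, tokens, pinned=False):
        """Insert or refresh an entry; return the keys evicted to stay in budget."""
        self.entries.pop(key, None)
        self.entries[key] = (text, tokens, pinned)
        evicted = []
        for k in list(self.entries):
            if self.used() <= self.budget:
                break
            if k == key or self.entries[k][2]:
                continue  # never evict the new entry or a pinned one
            del self.entries[k]
            evicted.append(k)
        return evicted
```

The point is not LRU specifically. It is that eviction becomes an explicit, testable decision instead of whatever falls off the front of the prompt.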

Claude Code

Claude Code stores everything under ~/.claude/: conversation state as JSONL, per-file edit snapshots, subagents as markdown, permissions declared as patterns like Read(./src/**) or Bash(git:*). It is all plain-file state — legible, grep-able, and easy to corrupt.

The append-only JSONL session log is crash-resistant in theory. In practice it’s written by multiple processes without locking, and when subagents or multiple tabs race, it corrupts — sessions become unreadable, history is lost, entire directories have been wiped by auto-updates. Append-only logging with per-file coordination was solved by 1985. The other job of an append-only log — the reason it became the backbone of every distributed database for the last twenty years — is replication: one writer, many readers, state converges. Claude Code’s log is one writer, one reader. The hosted product (claude.ai) is the closest thing to a distributed story, and it’s a separate codebase. Distribution bolted on, not designed in.
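For reference, the per-file coordination described above is small. The sketch below appends one JSON record per line under an exclusive advisory lock and reads back only complete records. It assumes a POSIX system (`fcntl` is not available on Windows) and that every writer cooperates by taking the lock; the function names and record shape are hypothetical and are not Claude Code's actual format.

```python
import fcntl
import json
import os


def append_event(path, event):
    """Append one JSON line under an exclusive advisory lock, then fsync."""
    line = json.dumps(event, separators=(",", ":")) + "\n"
    with open(path, "a", encoding="utf-8") as f:
        fcntl.flock(f, fcntl.LOCK_EX)  # blocks until other writers release
        try:
            f.write(line)
            f.flush()
            os.fsync(f.fileno())  # make the record durable before unlocking
        finally:
            fcntl.flock(f, fcntl.LOCK_UN)


def read_events(path):
    """Return all complete records, stopping at a torn or corrupt line."""
    events = []
    with open(path, "r", encoding="utf-8") as f:
        for raw in f:
            if not raw.endswith("\n"):
                break  # partial write left by a crashed writer
            try:
                events.append(json.loads(raw))
            except json.JSONDecodeError:
                break  # treat everything after a corrupt record as untrusted
    return events
```

A reader that tolerates a torn tail is what lets a crash cost one record instead of the whole session.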

Then capabilities. Claude Code’s docs contain a remarkably honest admission: “Read and Edit deny rules apply to Claude’s built-in file tools, not to Bash subprocesses. A Read(./.env) deny rule blocks the Read tool but does not prevent cat .env in Bash.” Ambient authority — the pattern Dennis and Van Horn told us to stop using in 1966 — is the default, and the escape hatch is the shell. The model reaches for Bash for file operations frequently. The permission system is a suggestion.
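The alternative to ambient authority is to hand each tool an explicit capability and give it no other route to the resource. Here is a rough sketch of the shape, with hypothetical names (`ReadCapability`, `summarize_tool`). Python itself cannot stop code from calling `open()` directly, so in a real runtime the enforcement would have to sit below the tool, for example in a sandboxed process. This only illustrates the interface.

```python
from pathlib import Path


class ReadCapability:
    """Handle granting read access under one root, minus denied file names."""

    def __init__(self, root, deny=frozenset()):
        self._root = Path(root).resolve()
        self._deny = deny

    def read_text(self, relative):
        # Resolve first so "../" and symlinks cannot escape the granted root.
        target = (self._root / relative).resolve()
        if not target.is_relative_to(self._root):
            raise PermissionError(f"outside granted root: {relative}")
        if target.name in self._deny:
            raise PermissionError(f"denied by capability: {relative}")
        return target.read_text(encoding="utf-8")


def summarize_tool(cap, relative):
    """A tool that is handed only `cap`; by design it reads files through nothing else."""
    return len(cap.read_text(relative))
```

The difference from a deny rule is where the check lives: on the handle every access goes through, not on one of several tools that can reach the same file.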

Plain-file state with a growing catalog of ways it corrupts itself. Durability and capabilities both implemented in application code. Distribution not implemented at all.

Codex CLI

Codex is the contrast case. Rust core, submission/event queue architecture, session state in SQLite with WAL, rollout files organized under ~/.codex/sessions/YYYY/MM/DD/. Sandboxing is enforced at the operating system: Apple Seatbelt on macOS, bwrap plus seccomp on Linux, native sandbox on Windows. The .git/ and .codex/ directories are protected even in writable roots. This is what a serious agent binary looks like.

Codex still gets one thing catastrophically wrong: the tool-call state machine. When parallel tool calls are issued and anything fails mid-request — a network blip, a rate limit, a strict provider that rejects a malformed payload — the rollout file records a tool call with no matching result. The session then cannot be resumed. The atomic pair tool_call → tool_result is not persisted atomically, because the design treats each call as independent work rather than as a transaction that must commit or roll back together. What’s missing is an op-level write-ahead log with compensating actions for multi-step work, roughly the thing Mohan and the ARIES team shipped in TODS in 1992.
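A minimal sketch of the missing piece, under stated assumptions: log the intent before executing, log the outcome after, and on recovery close every dangling call with an explicit aborted result so the session can be resumed. The record fields, the `compensate` hook, and the function names are hypothetical. This is not Codex's rollout format, and it is far simpler than ARIES.

```python
import json
import os


def log_append(path, record):
    """Durably append one record to the log."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
        f.flush()
        os.fsync(f.fileno())


def run_tool_call(path, call_id, name, args, execute):
    """Write the intent record first, then always write a matching result."""
    log_append(path, {"type": "tool_call", "id": call_id, "name": name, "args": args})
    try:
        result = {"type": "tool_result", "id": call_id, "status": "ok",
                  "output": execute(**args)}
    except Exception as exc:
        result = {"type": "tool_result", "id": call_id, "status": "error",
                  "output": str(exc)}
    log_append(path, result)
    return result


def recover(path, compensate=None):
    """Close each tool_call that has no result; return the ids that were closed."""
    with open(path, encoding="utf-8") as f:
        records = [json.loads(line) for line in f if line.strip()]
    closed = {r["id"] for r in records if r["type"] == "tool_result"}
    dangling = [r for r in records
                if r["type"] == "tool_call" and r["id"] not in closed]
    for call in dangling:
        if compensate is not None:
            compensate(call)  # hypothetical hook: undo or verify side effects
        log_append(path, {"type": "tool_result", "id": call["id"],
                          "status": "aborted", "output": None})
    return [c["id"] for c in dangling]
```

After `recover` runs, every call in the log has exactly one result, which is the invariant a resumable session needs.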

This is exactly where the thesis lives. Sandboxing is an operating-system concern. An operating system is already a distributed system of processes with well-studied isolation primitives, and Codex correctly delegates to those primitives. Atomic tool-call transactions are a distributed systems concern — agent and tool in different processes, on different machines, over different networks — and there is no operating system to delegate to.

Tool-call atomicity is the most visible failure, not the only one. Codex is a single process on a single machine talking to a single model provider. There is no agent-to-agent protocol, no distributed state layer, no access-control model above the process boundary, no way for one Codex session to resume work another started. Best sandbox in the category, worst tool-call state machine, and no answer at all for the distributed-systems questions every serious multi-agent deployment has to answer: where does shared state live, who is authorized to read or write it, and how do agents coordinate across processes and machines. OS-level problems delegated and solved; the distributed-systems problems left to the application. That split is the thesis of this post.

Hermes Agent

Hermes from Nous Research is the one in this set I actually enjoy using. It’s well built. Long-term memory lives in plain MEMORY.md and USER.md files. Session history is SQLite with full-text search. Tool execution abstracts cleanly behind an environment interface so you can swap local, Docker, SSH, Daytona, or Modal without changing agent code. Programmatic Tool Calling collapses chatty multi-turn tool loops into a single inference with a block of Python — the right direction on the per-call RPC problem.

Hermes is also AI engineers struggling with distributed systems, personified. The plain-text memory files that make it pleasant to debug have no access control: anything running as the user reads or writes MEMORY.md at will. That’s fine on a single-user laptop. It’s a disaster the moment two people share an agent, an agent runs as a service, or an identity other than the filesystem owner is supposed to be scoped. The SQLite session database is a local file on a local machine. There is no story for distributed state, for authorization on that state, or for replication of it to another agent, another user, another machine. The access-control model is the filesystem’s.

Multi-agent coordination is absent the same way. Hermes has a delegate_task path that runs a child in isolation and returns a summary string — a function call dressed up as coordination, not a protocol. True multi-agent work is named on the roadmap (DAG workflows, specialized roles, crash recovery) and scheduled, not shipped. Every serious multi-agent deployment needs consensus-adjacent primitives: who speaks when, whose write wins on a shared artifact, how a dead agent’s work is recovered. None of that is here.
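Two of those questions, whose write wins and how a dead agent's work gets taken over, have a well-known answer in leases with fencing tokens. The sketch below is an in-memory stand-in for a coordination service; `LeaseTable`, `Artifact`, and the injectable clock are assumptions for illustration and nothing here is part of Hermes.

```python
import time


class LeaseTable:
    """In-memory stand-in for a coordination service that grants one lease."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._holder = None  # (agent_id, expires_at)
        self._token = 0      # fencing token, strictly increasing

    def acquire(self, agent_id, ttl):
        """Return a new fencing token, or None if another agent's lease is live."""
        now = self._clock()
        if (self._holder is not None and self._holder[1] > now
                and self._holder[0] != agent_id):
            return None
        self._token += 1
        self._holder = (agent_id, now + ttl)
        return self._token


class Artifact:
    """Shared artifact that rejects writes carrying a stale fencing token."""

    def __init__(self):
        self.value = None
        self.last_token = 0

    def write(self, token, value):
        if token < self.last_token:
            return False  # writer lost its lease and does not know it yet
        self.last_token = token
        self.value = value
        return True
```

When an agent stalls past its lease, a successor acquires a higher token, and the stalled agent's late write is rejected at the artifact instead of silently clobbering newer work.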

The most delightful single-agent in the category. No answer for distributed state, no answer for access, no answer for agent-to-agent coordination. Exactly the shape of the problem.

Pi

Pi (pi.dev) is where this starts to get interesting. It’s a minimalist coding-agent CLI built on top of extensions — every non-trivial feature other agents ship in-core is a TypeScript module that plugs into the runtime. Sub-agents, plan mode, permission gates, sandboxing, MCP support: all extensions. Session state is a branching tree rather than a linear log, so you can navigate to an earlier point in a conversation and fork a new branch instead of destroying history. Fifteen-plus model providers with mid-session switching. Four integration modes — TUI, print/JSON, JSON-RPC, SDK — because not every consumer of an agent is a terminal user.

This is the right shape. Pi is the first coding agent I’ve seen take conversation causality seriously, and tree-structured history is how it does it: linear JSONL loses the causality information the moment two branches fan out, and Pi’s data model is the closest any of these has come to Lamport’s 1978 clock paper. The extension boundary is capability-adjacent: every extension declares what it touches, and that declaration is something an enforcement layer could key off of. Multiple transports are an honest acknowledgment that the agent’s edge is a protocol boundary, not a UI one.
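To show what a tree buys over a linear log, here is a small sketch of branching history with parent pointers. It is not Pi's actual data model; `SessionTree`, `Node`, and the method names are hypothetical. The ancestor check plays the role of a happened-before relation: a node precedes its descendants, and nodes on sibling branches are unordered.

```python
from dataclasses import dataclass
from itertools import count


@dataclass
class Node:
    id: int
    parent: "Node | None"
    content: str


class SessionTree:
    def __init__(self):
        self._ids = count(1)
        self.nodes = {}
        self.root = self._add(None, "<root>")

    def _add(self, parent, content):
        node = Node(next(self._ids), parent, content)
        self.nodes[node.id] = node
        return node

    def append(self, parent_id, content):
        """Add a child under any existing node; forking never destroys history."""
        return self._add(self.nodes[parent_id], content).id

    def path(self, node_id):
        """Causal history of a node: contents from the root down to it."""
        out, node = [], self.nodes[node_id]
        while node is not None:
            out.append(node.content)
            node = node.parent
        return out[::-1]

    def happened_before(self, a_id, b_id):
        """True if a is a strict ancestor of b; sibling branches are concurrent."""
        node = self.nodes[b_id].parent
        while node is not None:
            if node.id == a_id:
                return True
            node = node.parent
        return False
```

Flattening the same session into one JSONL file would interleave the two branches and lose the fact that neither one caused the other.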

Pi ships primitives, not a system. The extensions are capability-adjacent, not capabilities — there is no enforcement above the extension author’s good behavior, and an extension that wants to exfiltrate a token can. Mid-session model switching is a topology change under a live session with different dialects on either side, and the runtime ships it without a compatibility layer or a safety check. The tree is still a local file that only exists on one machine. Nothing about the extension model forces idempotency on a tool call, a write-ahead log under an operation, or a formally specified lifecycle the runtime can be proven to refine. You could build those on top of Pi. Somebody is meant to. That is not the same thing as them being built.
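As one example of what "forcing idempotency on a tool call" could look like, the sketch below derives a key from the session, step, tool name, and arguments, and replays the stored result when the same key is seen again. Everything here is an assumption for illustration (`IdempotentExecutor`, the key layout, the in-memory dict standing in for a durable store). A real version would need to persist the key together with the side effect, or a crash between the two could still cause a duplicate.

```python
import hashlib
import json


class IdempotentExecutor:
    """Runs each logical tool call at most once per key, replaying stored results."""

    def __init__(self):
        self._results = {}  # stand-in for a durable key -> result store

    @staticmethod
    def key_for(session_id, step, name, args):
        """Derive a stable key; sort_keys makes argument order irrelevant."""
        payload = json.dumps([session_id, step, name, args], sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def call(self, key, fn, **args):
        if key in self._results:
            return self._results[key]  # retry: return the recorded outcome
        result = fn(**args)
        self._results[key] = result
        return result
```

With this in place, a retried step after a timeout returns the original result instead of sending the email twice.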

Pi is the closest any coding agent has come to the right architecture. It is also a worked example of why the primitives alone are not enough.

The four agents in this post discover different edges of the same problem. Claude Code’s on-disk state has no invariants on it. Codex’s durability and sandbox are impeccable and its distributed layer is absent. Hermes is the best single-agent on the market and ships no access control worth the name. Pi has the first architecture that points at the right answer and leaves the answer to somebody else. Every one runs as a single process on a single machine under a single user. None solves distributed state, authorization, or agent-to-agent coordination — the three questions a multi-agent enterprise deployment actually has to answer.

A real agent runtime treats state like a database, identity like a capability, authorization as policy, and the lifecycle like a state machine you can prove things about. State in a store with CRDT semantics so concurrent writes merge. Identity as a cryptographic capability, not an API token and a hope. Operations committed atomically to a replicated log so crash recovery and replication are the same mechanism. Authorization as a consensus-backed policy, not a prompt.
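For a sense of what "CRDT semantics so concurrent writes merge" means at the smallest scale, here is a sketch of a state-based last-writer-wins map. The class and field names are hypothetical. LWW is the crudest useful CRDT: it resolves a conflict by discarding one of the concurrent writes, so shared text or lists would need a richer type. What it does show is the property that matters, which is that replicas converge regardless of merge order.

```python
class LWWMap:
    """State-based last-writer-wins map.

    Merge is commutative, associative, and idempotent, so replicas that have
    seen the same writes hold the same entries no matter how merges interleave.
    """

    def __init__(self, replica_id):
        self.replica_id = replica_id
        self.clock = 0
        self.entries = {}  # key -> ((counter, replica_id), value)

    def set(self, key, value):
        self.clock += 1
        self.entries[key] = ((self.clock, self.replica_id), value)

    def get(self, key):
        entry = self.entries.get(key)
        return entry[1] if entry else None

    def merge(self, other):
        """Fold another replica's state into this one."""
        for key, (stamp, value) in other.entries.items():
            # Stamps compare as (counter, replica_id); replica_id breaks ties.
            if key not in self.entries or stamp > self.entries[key][0]:
                self.entries[key] = (stamp, value)
            self.clock = max(self.clock, stamp[0])
```

Two agents can write to their own replicas while disconnected, exchange state in either order, and end up with identical maps without a coordinator.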

None of these are new ideas. All of them have been mature primitives for at least a decade. The agent loop is the easy part. The distributed system around it is the work.

I’ve got more coming here. Watch this space.